LOS ANGELES, CA / ACCESS Newswire / March 26, 2026 / Axis Robotics, which is building the distributed scaling layer for Physical AI, today announced that its main product launched on the Base blockchain on March 25. Following two rounds of large-scale community testing that generated more than 180,000 robotic trajectories, the company is moving from the test phase to global availability. Axis has developed an end-to-end system that redefines how data for Physical AI is generated, scaled, and distributed, and the company believes the future of embodied intelligence will be created not by a handful of labs but by broad, worldwide participation. Axis is therefore betting on simulation data and a globally distributed contributor network as Physical AI approaches a commercial inflection point and demand for scalable, highly diverse training data accelerates across the industry.

Axis Robotics is pursuing a Simulation-First strategy to rebuild how data for Physical AI is generated, diversified, and scaled. By 2025, multiple technology trends in robotics were already converging faster than expected. Hardware supply chains for embodied robots are undergoing rapid commoditization, turning what were once expensive prototypes into devices capable of large-scale real-world deployment. Vision-Language-Action (VLA) models are giving robots semantic understanding, reasoning, and planning: the cognitive "brain" required for general-purpose behavior. And across the data stack, from video priors to advanced synthetic simulation, a multilayered data pyramid is emerging to fuel the continuous evolution of Physical AI.

Yet one bottleneck remains fundamental: data coverage.

Compared with LLMs and autonomous driving, physical intelligence still faces a significant pre-training data deficit. The industry is pursuing several parallel paths to bridge this gap: large-scale real-world demonstration data collected through teleoperation and handheld interfaces such as UMI, natural human-robot interaction captured in egocentric video, and fast-advancing synthetic simulation data pipelines. As these sources mature, academia and industry are arriving at a new consensus:

Pretraining on large-scale, high-quality simulation data, followed by fine-tuning on a small set of real-world demonstrations, is one of the most practical and effective paths forward.

But this consensus raises the bar: simulation data must be high‑quality, low‑cost, and truly scalable. Without this trifecta, training progress will remain constrained by the dual challenges of expensive real‑world data and insufficient synthetic fidelity.

So the question naturally arises:

Is Physical AI's "GPT moment" approaching?

Axis's answer is yes, but only if we reinvent the way robotic data is produced, validated, and deployed at global scale.

Enabling Everyone to Contribute to Physical AI at Scale

Traditional robotic data collection relies on small expert teams or lab-bound teleoperation setups, which are expensive, limited in diversity, and inherently unscalable. Axis breaks this paradigm by building an end-to-end Physical AI data infrastructure that allows anyone, anywhere, to contribute meaningful data through distributed human participation. Robots will serve humans, but they will also be built and continuously evolved through large-scale human intelligence.

From day one, Axis understood that "providing data" alone is not enough. Solving the data bottleneck requires a full, vertically integrated pipeline, centered on three core components: task generation, data collection, and data evaluation & processing.

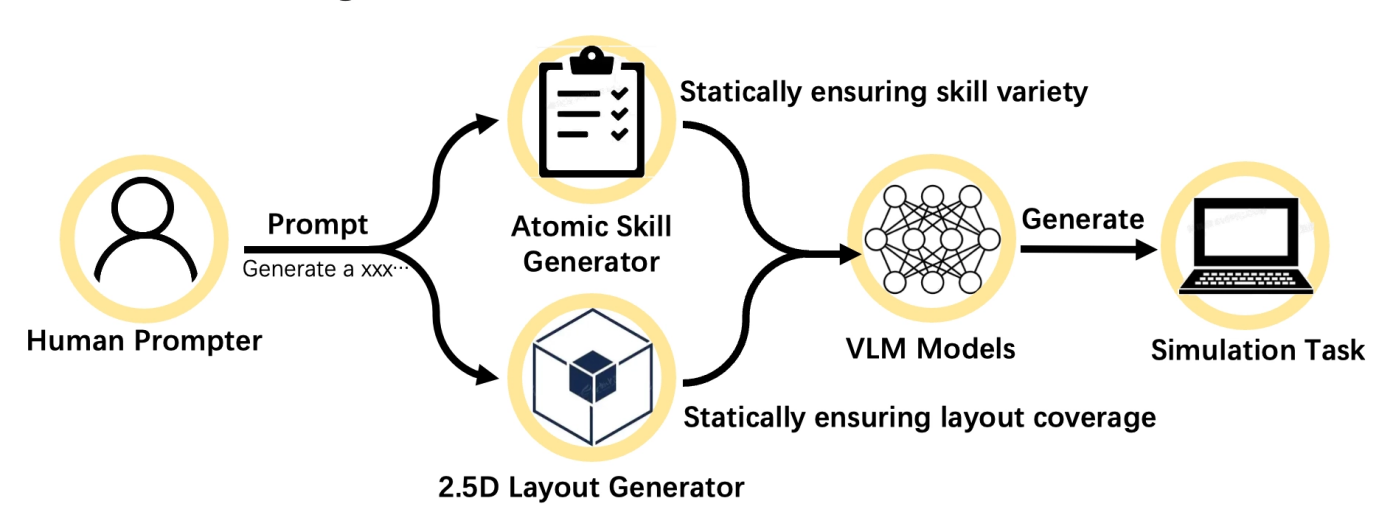

1. Dynamic Task Generation

A next-generation 3D task engine decomposes robotic capabilities into atomic skills, so that a practically unbounded number of high-quality simulation tasks can be generated from a single prompt. From simple single-step behaviors to complex chained tasks, robots can continuously expand their capabilities inside a rich, ever-evolving task universe. The boundaries of data define the boundaries of robotic capabilities.

(task generation pipeline)

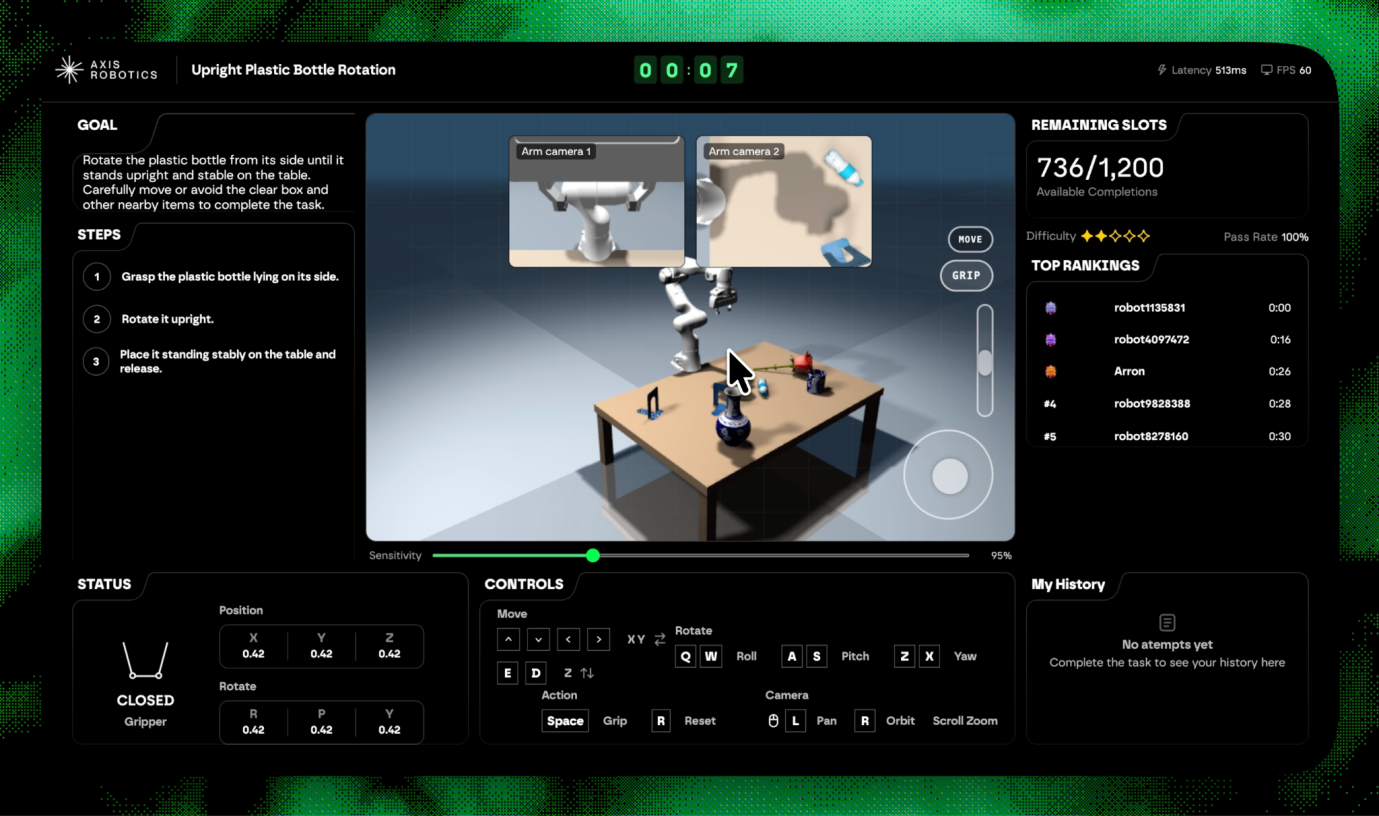

2. Zero‑Barrier Data Collection

Axis brings complex simulation environments, historically restricted to robotics labs, into the browser and onto mobile devices. Users can operate robots in real time directly from a web page, generating high-value trajectory data as naturally as playing a game. No hardware. No local compute. No expertise required.

3. Data Evaluation & Processing

Every trajectory is automatically replayed, validated, smoothed, and filtered through Axis's evaluation system. The system checks completeness, stability, validity, fluidity, and more, producing training-ready data assets at scale and replacing manual curation with systematic automation.
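
As an illustration only, the kind of checks described above can be sketched in a few lines of Python. This is not Axis's evaluation system: the names `Report`, `smooth`, and `evaluate`, the thresholds, and the representation of a trajectory as a list of joint-position vectors are all assumptions made for the example, and the replay step is left out.

```python
from dataclasses import dataclass
from typing import List, Sequence

Waypoint = Sequence[float]  # hypothetical: one joint-position vector per timestep


@dataclass
class Report:
    complete: bool  # task finished and the trajectory is long enough
    valid: bool     # every joint value stays within the assumed limit
    fluid: bool     # no step-to-step jump exceeds the assumed threshold

    @property
    def accepted(self) -> bool:
        return self.complete and self.valid and self.fluid


def smooth(traj: List[Waypoint], window: int = 3) -> List[List[float]]:
    """Centered moving average per joint; the window shrinks at both ends."""
    half = window // 2
    out = []
    for t in range(len(traj)):
        lo, hi = max(0, t - half), min(len(traj), t + half + 1)
        out.append([sum(traj[k][j] for k in range(lo, hi)) / (hi - lo)
                    for j in range(len(traj[t]))])
    return out


def evaluate(traj: List[Waypoint], task_done: bool, joint_limit: float = 3.14,
             max_step: float = 0.5, min_len: int = 3) -> Report:
    """Apply the three illustrative checks; all thresholds are placeholders."""
    complete = task_done and len(traj) >= min_len
    valid = all(abs(q) <= joint_limit for wp in traj for q in wp)
    fluid = all(abs(b - a) <= max_step
                for prev, cur in zip(traj, traj[1:])
                for a, b in zip(prev, cur))
    return Report(complete, valid, fluid)


if __name__ == "__main__":
    steady = [[0.0], [0.1], [0.2], [0.3]]
    jumpy = [[0.0], [2.0], [0.0]]
    print(evaluate(steady, task_done=True).accepted)
    print(evaluate(jumpy, task_done=True).accepted)
```

In a sketch like this, only trajectories whose report is accepted would be passed on to training; a production pipeline would also replay each trajectory in the simulator before scoring it.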

MetaSim: The Core Engine Behind the Pipeline

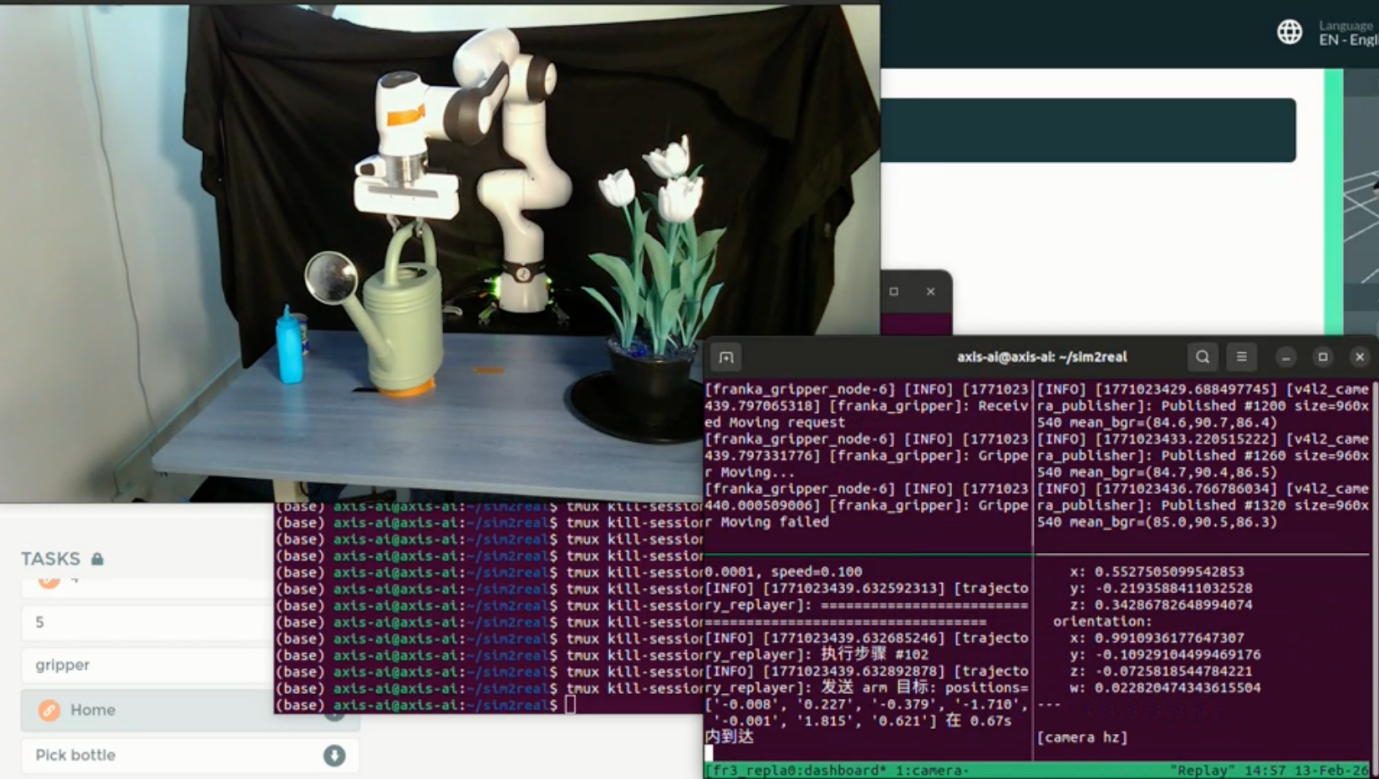

Underneath this user‑facing system lies MetaSim, Axis's unified infrastructure layer designed specifically for Physical AI. It handles simulator decoupling, data verification, and augmentation. Human demonstration data collected via the lightweight web simulator can be seamlessly reproduced in NVIDIA Isaac Sim for high‑fidelity validation.

Leveraging Isaac Sim's physics and rendering engines, Axis performs high-fidelity reconstruction and large-scale domain randomization, improving Sim-to-Real robustness and overall training value. Each piece of data becomes more generalizable, more useful, and more aligned with real-world deployment.
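
For readers unfamiliar with domain randomization, the idea is to replay the same demonstration under many sampled variations of physics and rendering parameters. The sketch below shows only that sampling step in plain Python. It does not use the Isaac Sim API, and the parameter names, ranges, and the `SceneParams` type are hypothetical values chosen for illustration.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SceneParams:
    friction: float           # surface friction coefficient
    object_mass_kg: float     # mass of the manipulated object
    light_intensity: float    # relative lighting scale
    camera_jitter_deg: float  # camera pose perturbation


# Hypothetical (low, high) sampling ranges; a real pipeline would tune these.
RANGES = {
    "friction": (0.4, 1.2),
    "object_mass_kg": (0.05, 0.5),
    "light_intensity": (0.5, 1.5),
    "camera_jitter_deg": (-3.0, 3.0),
}


def sample_scene(rng: random.Random) -> SceneParams:
    """Draw one scene configuration uniformly from the assumed ranges."""
    return SceneParams(**{name: rng.uniform(lo, hi)
                          for name, (lo, hi) in RANGES.items()})


def randomized_variants(n: int, seed: int = 0) -> List[SceneParams]:
    """Return n configurations; a fixed seed makes the set reproducible."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]


if __name__ == "__main__":
    for params in randomized_variants(3):
        print(params)
```

Each sampled configuration would then be used to re-render and re-simulate one recorded trajectory, so a single human demonstration yields many training examples.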

(terminal screen recording)

Why Crypto Matters: Scaling Trustless Participation and Incentives

Infrastructure alone is not enough. To enable true global participation, Axis is integrating crypto as the coordination and incentive layer.

Crypto provides:

Transparent, verifiable contribution records

Distributed participation without geographic barriers

Incentive mechanisms aligned with actual data value

New possibilities for data assetization

This is crypto not as a narrative device, but as a practical delivery mechanism: a way to scale human contributions, ensure fairness, and transform data production into an ecosystem rather than a closed pipeline.

Real‑World Validation: From "Little Prince's Rose" to Large‑Scale Community Testing

Axis has already validated the end‑to‑end effectiveness of its data pipeline.

In the "Little Prince's Rose" community event, Axis collected over 10,000 high-quality trajectories in just three days. After automated replay verification and augmentation, these trajectories were fed directly into training pipelines, and the resulting policy was successfully deployed on a physical Franka arm, where it executed a fully autonomous flower-watering task.

This milestone demonstrated Axis's zero‑shot Sim‑to‑Real transfer capability and proved, for the first time, that web‑based crowdsourced simulation can produce training‑grade robotic data.

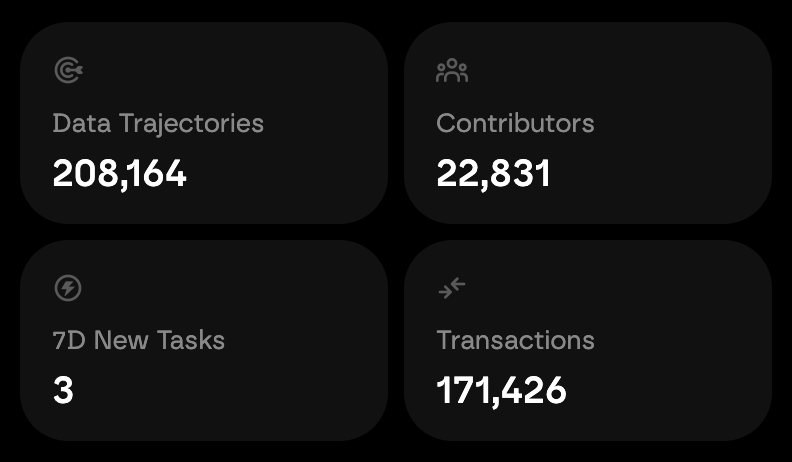

Across two testing rounds totaling 15 days, more than 30,000 users contributed over 180,000 trajectories, all publicly visible on the live data dashboard: https://hub.axisrobotics.ai/


Two Core Deliverables: High‑Quality Data and an Open, Modular Infrastructure Stack

Axis believes that just as robots will eventually serve every person, every person should have the opportunity to help build the next generation of robots.

This mission rests on two pillars:

1. High‑Quality Pretraining Datasets

Axis aims to set the standard for what qualifies as pretraining‑ready robotic data: diverse tasks, rich scene layouts, multi‑modal structure, and direct usability for foundational models. This is not about producing more data; it is about producing the right data.

2. A Scalable, Open Infrastructure Stack

Beyond data, Axis is building a flexible, modular infrastructure that will gradually open core interfaces across task generation, data collection, data processing, and training. Developers, researchers, companies, and communities will all be able to plug in, turning Physical AI from a closed pipeline into a global collaborative system.

Industry Partnerships and Real‑World Deployment

Axis is partnering with manufacturing companies, robot embodiment companies, and model developers, including Lotus Robotics, Booster Robotics, Zeroth, and Manycore Technology, to build scalable pipelines for data generation, model training, and deployment.

For robot embodiment companies that need large-scale simulation data, Axis converts their hardware into high-fidelity, sim-ready digital twins, generates matching simulation environments, and distributes tasks globally through its browser-based platform. Users collect diverse trajectories at scale, enabling standardized, low-cost data production and enterprise collaboration.

As hardware costs fall and supply chains mature, industry value is rapidly shifting toward AI models and data infrastructure. Simulation data, enhanced by high-precision physics and domain randomization, is becoming a core production factor, representing a potential $100 billion-plus infrastructure category in the trillion-dollar Physical AI economy.

Axis's globally distributed data network transforms a historically expensive and centralized simulation workflow into a highly scalable system with strong commercial potential.

Looking Ahead: Toward Physical AI's GPT Moment

Physical AI's GPT moment requires a system capable of capturing human intelligence and converting it into reliable, verifiable machine behavior. With its launch on Base, Axis is deploying the distributed infrastructure designed for this future: a resilient, open network built for global collaboration at scale.

(product overview)

On March 25, Axis launched its main product to the world.

Users, researchers, developers, and AI labs can now join and contribute to what may become the largest and most diverse robotic training dataset ever built.

Physical AI will not be owned by a few. It will be built by all of us.

Company: Axis Robotics

Contact: Christine Sun

Email: christine@axis-labs.ai

Website: https://axisrobotics.ai/

SOURCE: Axis Robotics
